西安做网站的网络公司seo网址超级外链工具

目录

引言:

1.所有文件展示:

1.中文停用词数据(hit_stopwords.txt)来源于:

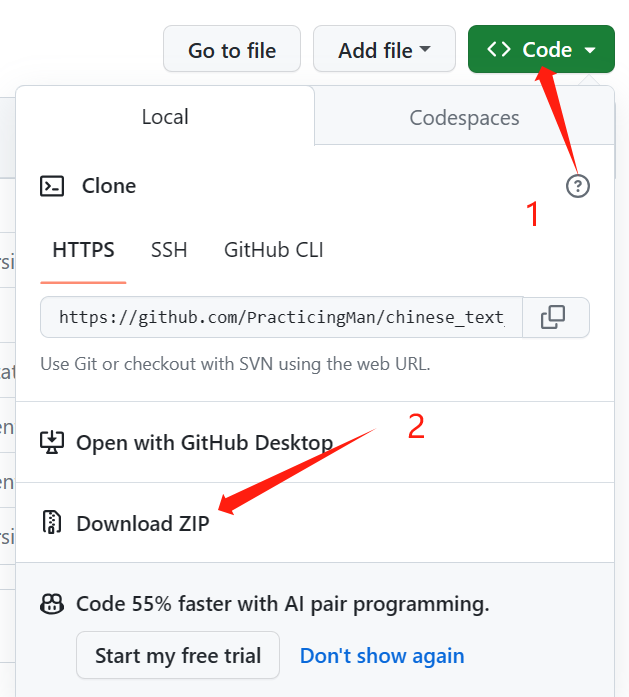

2.其中data数据集为chinese_text_cnn-master.zip提取出的文件。点击链接进入github,点击Code、Download ZIP即可下载。

2.安装依赖库:

3.数据预处理(data_set.py):

train.txt-去除停用词后的训练集文件:

test.txt -去除停用词后的测试集文件:

4. 模型训练以及保存(main.py)

1.LSTM模型搭建:

2.main.py代价展示 :

3.模型保存

4.训练结果

5.LSTM模型测试(test.py)

1.测试结果:

2.测试结果:

6.完整代码展示:

1.data_set.py

2.mian.py

3.test.py

引言:

在当今数字化时代,人们在社交媒体、评论平台以及各类在线交流中产生了海量的文本数据。这些数据蕴含着丰富的情感信息,从而成为了深入理解用户态度、市场趋势,甚至社会情绪的宝贵资源。自然语言处理(NLP)的发展为我们提供了强大的工具,使得对文本情感进行分析成为可能。在这个领域中,长短时记忆网络(LSTM)凭借其能够捕捉文本序列中长距离依赖关系的能力,成为了情感分析任务中的一项重要技术。

本篇博客将手把手地教你如何使用LSTM网络实现中文文本情感分析。我们将从数据预处理开始,逐步构建一个端到端的情感分析模型。通过详细的步骤和示例代码,深入了解如何处理中文文本数据、构建LSTM模型、进行训练和评估。

1.所有文件展示:

1.中文停用词数据(hit_stopwords.txt)来源于:

项目目录预览 - stopwords - GitCode

2.其中data数据集为chinese_text_cnn-master.zip提取出的文件。点击链接进入github,点击Code、Download ZIP即可下载。

2.安装依赖库:

pip install torch # 搭建LSTM模型

pip install gensim # 中文文本词向量转换

pip install numpy # 数据清洗、预处理

pip install pandas

3.数据预处理(data_set.py):

# -*- coding: utf-8 -*-

# @Time : 2023/11/15 10:52

# @Author :Muzi

# @File : data_set.py

# @Software: PyCharm

import pandas as pd

import jieba# 数据读取

def load_tsv(file_path):data = pd.read_csv(file_path, sep='\t')data_x = data.iloc[:, -1]data_y = data.iloc[:, 1]return data_x, data_ytrain_x, train_y = load_tsv("./data/train.tsv")

test_x, test_y = load_tsv("./data/test.tsv")

train_x=[list(jieba.cut(x)) for x in train_x]

test_x=[list(jieba.cut(x)) for x in test_x]with open('./hit_stopwords.txt','r',encoding='UTF8') as f:stop_words=[word.strip() for word in f.readlines()]print('Successfully')

def drop_stopword(datas):for data in datas:for word in data:if word in stop_words:data.remove(word)return datasdef save_data(datax,path):with open(path, 'w', encoding="UTF8") as f:for lines in datax:for i, line in enumerate(lines):f.write(str(line))# 如果不是最后一行,就添加一个逗号if i != len(lines) - 1:f.write(',')f.write('\n')if __name__ == '__main':train_x=drop_stopword(train_x)test_x=drop_stopword(test_x)save_data(train_x,'./train.txt')save_data(test_x,'./test.txt')print('Successfully')train.txt-去除停用词后的训练集文件:

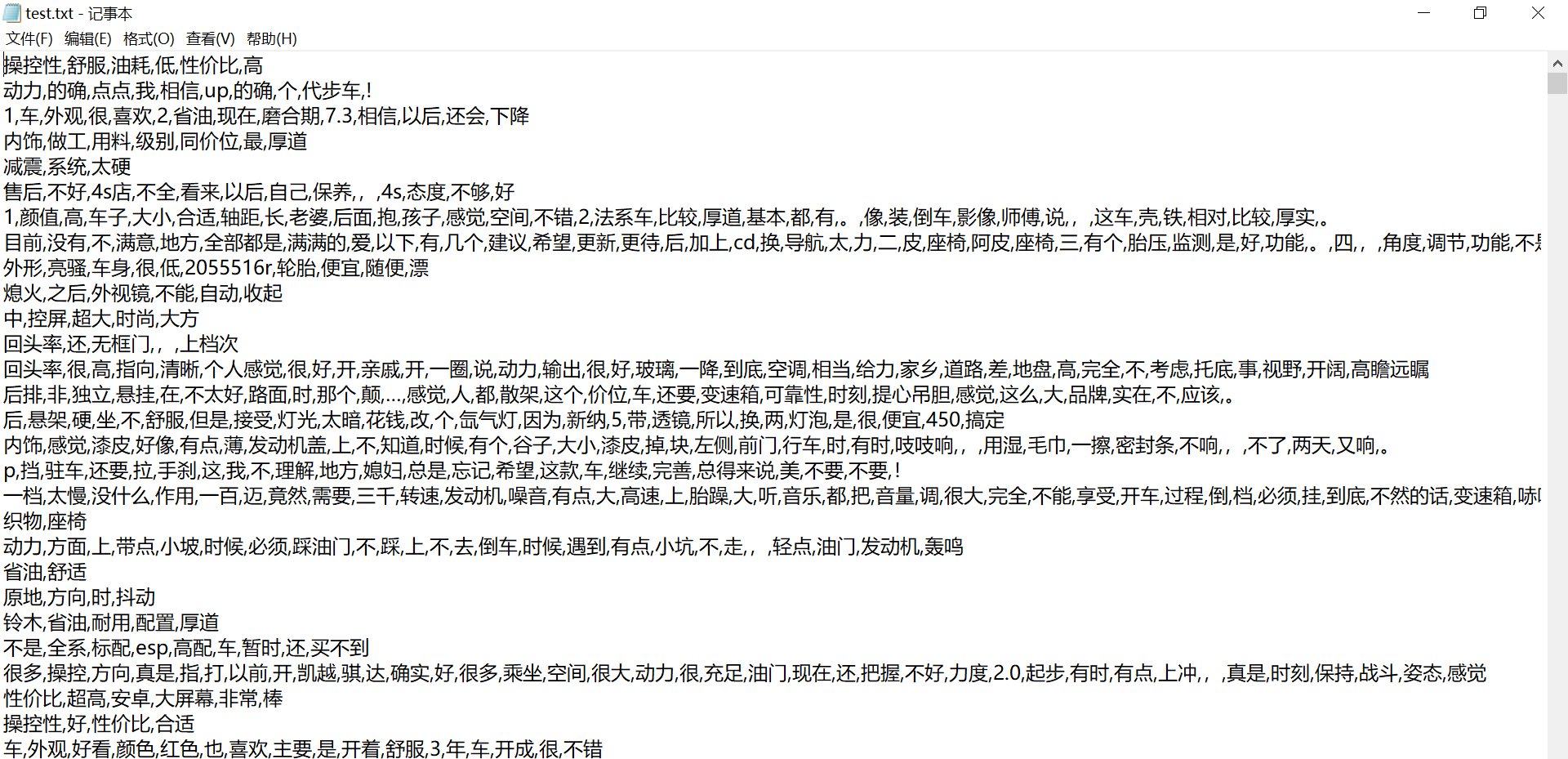

test.txt -去除停用词后的测试集文件:

4. 模型训练以及保存(main.py)

1.LSTM模型搭建:

不同的数据集应该有不同的分类标准,我这里用到的数据模型属于二分类问题

# 定义LSTM模型

class LSTMModel(nn.Module):def __init__(self, input_size, hidden_size, output_size):super(LSTMModel, self).__init__()self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)self.fc = nn.Linear(hidden_size, output_size)def forward(self, x):lstm_out, _ = self.lstm(x)output = self.fc(lstm_out[:, -1, :]) # 取序列的最后一个输出return output# 定义模型

input_size = word2vec_model.vector_size

hidden_size = 50 # 你可以根据需要调整隐藏层大小

output_size = 2 # 输出的大小,根据你的任务而定model = LSTMModel(input_size, hidden_size, output_size)

# 定义损失函数和优化器

criterion = nn.CrossEntropyLoss() # 交叉熵损失函数

optimizer = torch.optim.Adam(model.parameters(), lr=0.0002)2.main.py代价展示 :

# -*- coding: utf-8 -*-

# @Time : 2023/11/13 20:31

# @Author :Muzi

# @File : mian.py.py

# @Software: PyCharm

import pandas as pd

import torch

from torch import nn

import jieba

from gensim.models import Word2Vec

import numpy as np

from data_set import load_tsv

from torch.utils.data import DataLoader, TensorDataset# 数据读取

def load_txt(path):with open(path,'r',encoding='utf-8') as f:data=[[line.strip()] for line in f.readlines()]return datatrain_x=load_txt('train.txt')

test_x=load_txt('test.txt')

train=train_x+test_x

X_all=[i for x in train for i in x]_, train_y = load_tsv("./data/train.tsv")

_, test_y = load_tsv("./data/test.tsv")

# 训练Word2Vec模型

word2vec_model = Word2Vec(sentences=X_all, vector_size=100, window=5, min_count=1, workers=4)# 将文本转换为Word2Vec向量表示

def text_to_vector(text):vector = [word2vec_model.wv[word] for word in text if word in word2vec_model.wv]return sum(vector) / len(vector) if vector else [0] * word2vec_model.vector_sizeX_train_w2v = [[text_to_vector(text)] for line in train_x for text in line]

X_test_w2v = [[text_to_vector(text)] for line in test_x for text in line]# 将词向量转换为PyTorch张量

X_train_array = np.array(X_train_w2v, dtype=np.float32)

X_train_tensor = torch.Tensor(X_train_array)

X_test_array = np.array(X_test_w2v, dtype=np.float32)

X_test_tensor = torch.Tensor(X_test_array)

#使用DataLoader打包文件

train_dataset = TensorDataset(X_train_tensor, torch.LongTensor(train_y))

train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)

test_dataset = TensorDataset(X_test_tensor,torch.LongTensor(test_y))

test_loader = DataLoader(test_dataset, batch_size=64, shuffle=True)

# 定义LSTM模型

class LSTMModel(nn.Module):def __init__(self, input_size, hidden_size, output_size):super(LSTMModel, self).__init__()self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)self.fc = nn.Linear(hidden_size, output_size)def forward(self, x):lstm_out, _ = self.lstm(x)output = self.fc(lstm_out[:, -1, :]) # 取序列的最后一个输出return output# 定义模型

input_size = word2vec_model.vector_size

hidden_size = 50 # 你可以根据需要调整隐藏层大小

output_size = 2 # 输出的大小,根据你的任务而定model = LSTMModel(input_size, hidden_size, output_size)

# 定义损失函数和优化器

criterion = nn.CrossEntropyLoss() # 交叉熵损失函数

optimizer = torch.optim.Adam(model.parameters(), lr=0.0002)if __name__ == "__main__":# 训练模型num_epochs = 10log_interval = 100 # 每隔100个批次输出一次日志loss_min=100for epoch in range(num_epochs):model.train()for batch_idx, (data, target) in enumerate(train_loader):outputs = model(data)loss = criterion(outputs, target)optimizer.zero_grad()loss.backward()optimizer.step()if batch_idx % log_interval == 0:print('Epoch [{}/{}], Batch [{}/{}], Loss: {:.4f}'.format(epoch + 1, num_epochs, batch_idx, len(train_loader), loss.item()))# 保存最佳模型if loss.item()<loss_min:loss_min=loss.item()torch.save(model, 'model.pth')# 模型评估with torch.no_grad():model.eval()correct = 0total = 0for data, target in test_loader:outputs = model(data)_, predicted = torch.max(outputs.data, 1)total += target.size(0)correct += (predicted == target).sum().item()accuracy = correct / totalprint('Test Accuracy: {:.2%}'.format(accuracy))

3.模型保存

# 保存最佳模型if loss.item()<loss_min:loss_min=loss.item()torch.save(model, 'model.pth')4.训练结果

5.LSTM模型测试(test.py)

# -*- coding: utf-8 -*-

# @Time : 2023/11/15 15:53

# @Author :Muzi

# @File : test.py.py

# @Software: PyCharm

import torch

import jieba

from torch import nn

from gensim.models import Word2Vec

import numpy as npclass LSTMModel(nn.Module):def __init__(self, input_size, hidden_size, output_size):super(LSTMModel, self).__init__()self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)self.fc = nn.Linear(hidden_size, output_size)def forward(self, x):lstm_out, _ = self.lstm(x)output = self.fc(lstm_out[:, -1, :]) # 取序列的最后一个输出return output# 数据读取

def load_txt(path):with open(path,'r',encoding='utf-8') as f:data=[[line.strip()] for line in f.readlines()]return data#去停用词

def drop_stopword(datas):# 假设你有一个函数用于预处理文本数据with open('./hit_stopwords.txt', 'r', encoding='UTF8') as f:stop_words = [word.strip() for word in f.readlines()]datas=[x for x in datas if x not in stop_words]return datasdef preprocess_text(text):text=list(jieba.cut(text))text=drop_stopword(text)return text# 将文本转换为Word2Vec向量表示

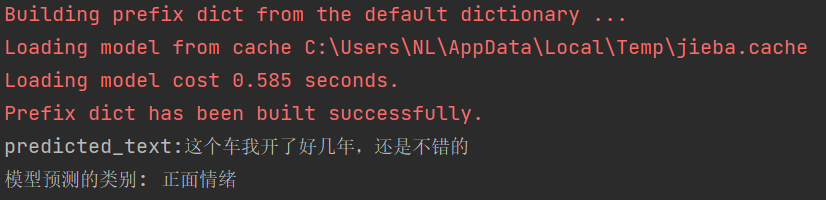

def text_to_vector(text):train_x = load_txt('train.txt')test_x = load_txt('test.txt')train = train_x + test_xX_all = [i for x in train for i in x]# 训练Word2Vec模型word2vec_model = Word2Vec(sentences=X_all, vector_size=100, window=5, min_count=1, workers=4)vector = [word2vec_model.wv[word] for word in text if word in word2vec_model.wv]return sum(vector) / len(vector) if vector else [0] * word2vec_model.vector_sizeif __name__ == '__main__':# input_text = "这个车完全就是垃圾,又热又耗油"input_text = "这个车我开了好几年,还是不错的"label = {1: "正面情绪", 0: "负面情绪"}model = torch.load('model.pth')# 预处理输入数据input_data = preprocess_text(input_text)# 确保输入词向量与模型维度和数据类型相同input_data=[[text_to_vector(input_data)]]input_arry= np.array(input_data, dtype=np.float32)input_tensor = torch.Tensor(input_arry)# 将输入数据传入模型with torch.no_grad():output = model(input_tensor)predicted_class = label[torch.argmax(output).item()]print(f"predicted_text:{input_text}")print(f"模型预测的类别: {predicted_class}")1.测试结果:

2.测试结果:

6.完整代码展示:

1.data_set.py

import pandas as pd

import jieba# 数据读取

def load_tsv(file_path):data = pd.read_csv(file_path, sep='\t')data_x = data.iloc[:, -1]data_y = data.iloc[:, 1]return data_x, data_ywith open('./hit_stopwords.txt','r',encoding='UTF8') as f:stop_words=[word.strip() for word in f.readlines()]print('Successfully')

def drop_stopword(datas):for data in datas:for word in data:if word in stop_words:data.remove(word)return datasdef save_data(datax,path):with open(path, 'w', encoding="UTF8") as f:for lines in datax:for i, line in enumerate(lines):f.write(str(line))# 如果不是最后一行,就添加一个逗号if i != len(lines) - 1:f.write(',')f.write('\n')if __name__ == '__main':train_x, train_y = load_tsv("./data/train.tsv")test_x, test_y = load_tsv("./data/test.tsv")train_x = [list(jieba.cut(x)) for x in train_x]test_x = [list(jieba.cut(x)) for x in test_x]train_x=drop_stopword(train_x)test_x=drop_stopword(test_x)save_data(train_x,'./train.txt')save_data(test_x,'./test.txt')print('Successfully')2.mian.py

import pandas as pd

import torch

from torch import nn

import jieba

from gensim.models import Word2Vec

import numpy as np

from data_set import load_tsv

from torch.utils.data import DataLoader, TensorDataset# 数据读取

def load_txt(path):with open(path,'r',encoding='utf-8') as f:data=[[line.strip()] for line in f.readlines()]return datatrain_x=load_txt('train.txt')

test_x=load_txt('test.txt')

train=train_x+test_x

X_all=[i for x in train for i in x]_, train_y = load_tsv("./data/train.tsv")

_, test_y = load_tsv("./data/test.tsv")

# 训练Word2Vec模型

word2vec_model = Word2Vec(sentences=X_all, vector_size=100, window=5, min_count=1, workers=4)# 将文本转换为Word2Vec向量表示

def text_to_vector(text):vector = [word2vec_model.wv[word] for word in text if word in word2vec_model.wv]return sum(vector) / len(vector) if vector else [0] * word2vec_model.vector_sizeX_train_w2v = [[text_to_vector(text)] for line in train_x for text in line]

X_test_w2v = [[text_to_vector(text)] for line in test_x for text in line]# 将词向量转换为PyTorch张量

X_train_array = np.array(X_train_w2v, dtype=np.float32)

X_train_tensor = torch.Tensor(X_train_array)

X_test_array = np.array(X_test_w2v, dtype=np.float32)

X_test_tensor = torch.Tensor(X_test_array)

#使用DataLoader打包文件

train_dataset = TensorDataset(X_train_tensor, torch.LongTensor(train_y))

train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)

test_dataset = TensorDataset(X_test_tensor,torch.LongTensor(test_y))

test_loader = DataLoader(test_dataset, batch_size=64, shuffle=True)

# 定义LSTM模型

class LSTMModel(nn.Module):def __init__(self, input_size, hidden_size, output_size):super(LSTMModel, self).__init__()self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)self.fc = nn.Linear(hidden_size, output_size)def forward(self, x):lstm_out, _ = self.lstm(x)output = self.fc(lstm_out[:, -1, :]) # 取序列的最后一个输出return output# 定义模型

input_size = word2vec_model.vector_size

hidden_size = 50 # 你可以根据需要调整隐藏层大小

output_size = 2 # 输出的大小,根据你的任务而定model = LSTMModel(input_size, hidden_size, output_size)

# 定义损失函数和优化器

criterion = nn.CrossEntropyLoss() # 交叉熵损失函数

optimizer = torch.optim.Adam(model.parameters(), lr=0.0002)if __name__ == "__main__":# 训练模型num_epochs = 10log_interval = 100 # 每隔100个批次输出一次日志loss_min=100for epoch in range(num_epochs):model.train()for batch_idx, (data, target) in enumerate(train_loader):outputs = model(data)loss = criterion(outputs, target)optimizer.zero_grad()loss.backward()optimizer.step()if batch_idx % log_interval == 0:print('Epoch [{}/{}], Batch [{}/{}], Loss: {:.4f}'.format(epoch + 1, num_epochs, batch_idx, len(train_loader), loss.item()))# 保存最佳模型if loss.item()<loss_min:loss_min=loss.item()torch.save(model, 'model.pth')# 模型评估with torch.no_grad():model.eval()correct = 0total = 0for data, target in test_loader:outputs = model(data)_, predicted = torch.max(outputs.data, 1)total += target.size(0)correct += (predicted == target).sum().item()accuracy = correct / totalprint('Test Accuracy: {:.2%}'.format(accuracy))3.test.py

import torch

import jieba

from torch import nn

from gensim.models import Word2Vec

import numpy as npclass LSTMModel(nn.Module):def __init__(self, input_size, hidden_size, output_size):super(LSTMModel, self).__init__()self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)self.fc = nn.Linear(hidden_size, output_size)def forward(self, x):lstm_out, _ = self.lstm(x)output = self.fc(lstm_out[:, -1, :]) # 取序列的最后一个输出return output# 数据读取

def load_txt(path):with open(path,'r',encoding='utf-8') as f:data=[[line.strip()] for line in f.readlines()]return data#去停用词

def drop_stopword(datas):# 假设你有一个函数用于预处理文本数据with open('./hit_stopwords.txt', 'r', encoding='UTF8') as f:stop_words = [word.strip() for word in f.readlines()]datas=[x for x in datas if x not in stop_words]return datasdef preprocess_text(text):text=list(jieba.cut(text))text=drop_stopword(text)return text# 将文本转换为Word2Vec向量表示

def text_to_vector(text):train_x = load_txt('train.txt')test_x = load_txt('test.txt')train = train_x + test_xX_all = [i for x in train for i in x]# 训练Word2Vec模型word2vec_model = Word2Vec(sentences=X_all, vector_size=100, window=5, min_count=1, workers=4)vector = [word2vec_model.wv[word] for word in text if word in word2vec_model.wv]return sum(vector) / len(vector) if vector else [0] * word2vec_model.vector_sizeif __name__ == '__main__':input_text = "这个车完全就是垃圾,又热又耗油"# input_text = "这个车我开了好几年,还是不错的"label = {1: "正面情绪", 0: "负面情绪"}model = torch.load('model.pth')# 预处理输入数据input_data = preprocess_text(input_text)# 确保输入词向量与模型维度和数据类型相同input_data=[[text_to_vector(input_data)]]input_arry= np.array(input_data, dtype=np.float32)input_tensor = torch.Tensor(input_arry)# 将输入数据传入模型with torch.no_grad():output = model(input_tensor)# 这里只一个简单的示例predicted_class = label[torch.argmax(output).item()]print(f"predicted_text:{input_text}")print(f"模型预测的类别: {predicted_class}")